Jack Jones introduced FAIR-CAM™, the FAIR Controls Analytics Model, to challenge the cybersecurity profession to move beyond its reflexive focus on cybersecurity controls lists and frameworks and take a systems view of cyber defense.

Scoring an organization’s cybersecurity program based only on common controls checklists or maturity frameworks “doesn’t provide meaningful insight into which controls are most or least valuable,” Jack wrote.

“And when organizations are unable to reliably understand the value they receive for their investments in risk management, then it’s impossible to know whether they are overspending, underspending, or misallocating their resources.”

Jack’s white paper, An Introduction to the FAIR Controls Analytics Model (FAIR-CAM) (free FAIR Institute membership required to view), lays out a detailed, accessible path to shaking up the conventional mindset in cyber risk management, including these three fresh ways to think about cybersecurity controls:

1. Cybersecurity controls should be assessed based on their risk reduction value.

That may sound obvious, but in current practice, the risk reduction value of controls is just assumed, not measured. FAIR-CAM teaches that we can put a value on risk reduction if we understand

>>Which loss event scenarios a control is relevant to, and

>>How significantly the control affects the frequency or magnitude of loss from those scenarios.

FAIR-CAM also provides units of measurement for control performance (for example, frequency, probability, and time), which are essential for quantifying a control’s effect on risk.
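As a rough illustration of the two questions above, the Python sketch below values a control as the difference between annualized loss with and without it. Multiplying frequency by magnitude, the class and function names, and every figure are assumptions made for this example; none of them come from the FAIR-CAM white paper, which remains the reference for how the model treats these quantities.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """A loss event scenario (hypothetical structure, for illustration only)."""
    name: str
    events_per_year: float  # loss event frequency
    loss_per_event: float   # loss magnitude, in dollars

    def annualized_loss(self) -> float:
        return self.events_per_year * self.loss_per_event


@dataclass(frozen=True)
class ControlEffect:
    """How strongly a control affects one scenario (assumed fractions in [0, 1])."""
    scenario: str
    frequency_reduction: float = 0.0
    magnitude_reduction: float = 0.0


def risk_reduction_value(scenarios, effects):
    """Sum the annualized loss a control removes across the scenarios it is relevant to."""
    effect_by_scenario = {e.scenario: e for e in effects}
    total = 0.0
    for s in scenarios:
        effect = effect_by_scenario.get(s.name)
        if effect is None:
            continue  # the control is not relevant to this scenario
        residual = (s.events_per_year * (1 - effect.frequency_reduction)
                    * s.loss_per_event * (1 - effect.magnitude_reduction))
        total += s.annualized_loss() - residual
    return total


# Made-up numbers: the control halves phishing frequency and is irrelevant to ransomware.
scenarios = [
    Scenario("phishing", events_per_year=4, loss_per_event=50_000),
    Scenario("ransomware", events_per_year=0.5, loss_per_event=1_000_000),
]
effects = [ControlEffect("phishing", frequency_reduction=0.5)]
value = risk_reduction_value(scenarios, effects)
print(f"Estimated risk reduction: ${value:,.0f} per year")
```

Even in this simplified form, the control earns no credit for scenarios it is not relevant to, which is the distinction a checklist score cannot make.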

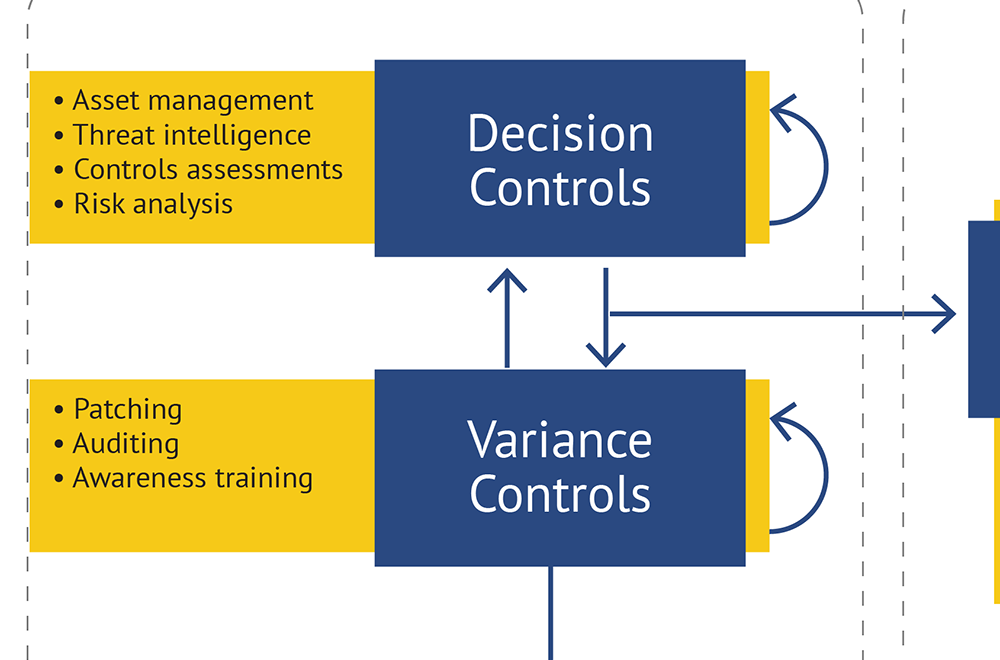

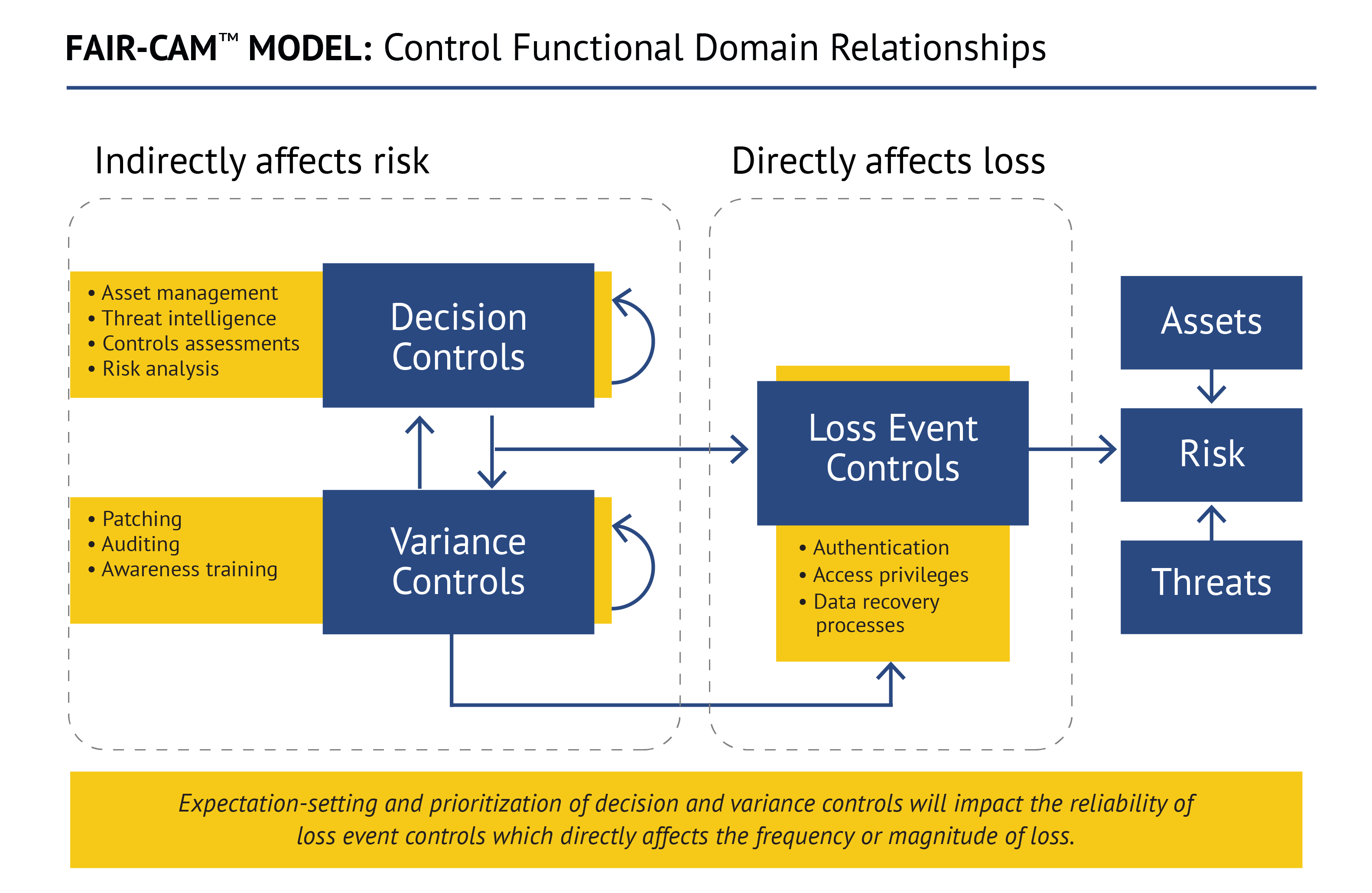

2. Controls have relationships with, and dependencies on, other controls.

The common control frameworks used in cybersecurity are myopic on this point and just assume that more is better. In fact, Jack points out, “weaknesses in some controls can diminish the efficacy of other controls.” Example: how well an organization patches depends on its vulnerability identification, threat intelligence and risk analysis capabilities. Without accounting for these relationships and dependencies, control efficacy and value cannot be accurately measured. You also can’t pin down and treat the root causes behind control failures.
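One way to picture the patching example is to discount a control’s standalone efficacy by the efficacy of everything it depends on. The sketch below does this by simple multiplication, which is an assumption chosen for brevity and not the relationship math defined in FAIR-CAM; the efficacy figures and the `effective_efficacy` helper are likewise hypothetical.

```python
from math import prod

# Hypothetical standalone efficacy of each capability, as a fraction in [0, 1].
standalone = {
    "patching": 0.9,
    "vulnerability identification": 0.8,
    "threat intelligence": 0.7,
    "risk analysis": 0.9,
}

# Which capabilities each control depends on (the patching example from the text).
depends_on = {
    "patching": ["vulnerability identification", "threat intelligence", "risk analysis"],
}


def effective_efficacy(control, standalone, depends_on, _visiting=frozenset()):
    """Discount a control's standalone efficacy by the efficacy of its dependencies.

    Assumes dependencies combine multiplicatively and form no cycles.
    """
    if control in _visiting:
        raise ValueError(f"circular dependency involving {control!r}")
    visiting = _visiting | {control}
    upstream = prod(
        effective_efficacy(dep, standalone, depends_on, visiting)
        for dep in depends_on.get(control, [])
    )  # prod() of an empty sequence is 1, so leaf controls keep their own efficacy
    return standalone[control] * upstream


patching = effective_efficacy("patching", standalone, depends_on)
print(f"Patching efficacy after dependencies: {patching:.2%}")
```

Under these made-up numbers, a patching process rated at 90% on its own delivers well under half of its potential, and tracing the product back through the graph points to the weakest upstream capability as the place to look for root causes.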

3. A control’s operational performance can be less than its intended performance.

This is another key insight, and a departure from the essentially set-it-and-forget-it spirit of a single-minded focus on frameworks. FAIR-CAM introduces the concept of functional domain categories to distinguish among control functions that affect risk directly (Loss Event Controls), those that affect the reliability of controls (Variance Management Controls), and those that affect decision-making (Decision Support Controls).

The goal is for operational performance to equal intended performance as closely as possible, but that requires Variance Management Controls and Decision Support Controls to minimize the frequency and duration of variance from a control’s intended condition.
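The role of variance frequency and duration can be sketched as a time-weighted average: the control performs as intended while in its intended condition and at some degraded level while in variance. This weighting, the function name, and the sample figures are assumptions for illustration only, not formulas taken from FAIR-CAM.

```python
DAYS_PER_YEAR = 365.0


def operational_performance(intended, variances_per_year, mean_days_in_variance,
                            degraded=0.0):
    """Time-weighted performance of a control over a year (illustrative model).

    intended:              performance when the control is in its intended condition
    variances_per_year:    how often the control drifts out of that condition
    mean_days_in_variance: how long each variance lasts before it is corrected
    degraded:              performance while in variance (assumed 0 by default)
    """
    days_in_variance = min(variances_per_year * mean_days_in_variance, DAYS_PER_YEAR)
    fraction_in_variance = days_in_variance / DAYS_PER_YEAR
    return intended * (1 - fraction_in_variance) + degraded * fraction_in_variance


# Made-up numbers: the same control before and after faster detection of variance.
slow_to_correct = operational_performance(0.9, variances_per_year=4,
                                          mean_days_in_variance=18.25)
quick_to_correct = operational_performance(0.9, variances_per_year=4,
                                           mean_days_in_variance=1.0)
print(f"slow correction:  {slow_to_correct:.3f}")
print(f"quick correction: {quick_to_correct:.3f}")
```

In this toy model, shortening the time a control spends in variance, which is the job the text assigns to Variance Management Controls, moves operational performance closer to intended performance without changing the control itself.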

As an example, consider a human as a Loss Event Control when it comes to phishing email (to click or not to click, that is the question) and phishing awareness training as a Decision Support Control that can improve their anti-phishing decision-making. Also, as a taste of the complexity that FAIR-CAM was built to handle, the effectiveness of the training can be diminished if threat intelligence (another Decision Support Control) is inadequate because the people being trained wouldn’t be learning about new types of phishing attacks.

“There are feedback loops and connections here that are not explicitly accounted for in our profession today,” Jack has said. “And if we don’t account for it, we can’t measure it. With FAIR-CAM, we can begin to understand controls as a complex system… and we can apply that understanding to use our limited resources more cost-effectively.”