A New Approach to Data for Faster FAIR Quantitative Risk Analysis

“10x the speed and ultimately the effectiveness” of FAIR risk scenario analysis – that was the promise of a forward-looking session at the recent 2021 FAIR Conference led by Ben Gowan and Justin Theriot, data scientists at RiskLens, the technical adviser to the FAIR Institute. This acceleration comes from a new approach to refining the raw material of risk analysis into prepared industry data.

FAIRCON21 session: Case Study - Accelerating FAIR Analyses by 10x with Industry Data

Presenters: Ben Gowan, Data Science Manager, RiskLens, and Justin Theriot, Sr. Data Scientist, RiskLens

FAIR Institute members can watch the video of this FAIRCON21 session in the LINK member community. Not a member yet? Join the FAIR Institute now, then sign up for LINK.

Prepared Industry Data Defined

Justin and Ben explained that data for analysis should be:

- FAIR prepared to plug into the analysis workflow as frequency and magnitude data – particularly necessary to run a large-scale analytics effort

- Scope aware to account for variation across risk scenarios as you fill in the FAIR factors. For instance, a refined look at the data shows that whether a threat is malicious or accidental on the left side of the FAIR ontology determines the magnitude of impact on the right side.

- Domain and distribution appropriate. Losses should be modeled as a distribution; a simple point estimate of loss, such as a per-record cost in a breach, isn’t valid. Similarly, data needs adjustment for economic factors such as inflation.
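To make the "frequency and magnitude as distributions" idea concrete, here is a minimal Python sketch. It is not the RiskLens method: the lognormal magnitude, the Poisson-style event count, and every parameter value are illustrative assumptions, and the function names `adjust_for_inflation` and `simulate_annual_loss` are hypothetical.

```python
import random

def adjust_for_inflation(amount, annual_rate, years):
    """Restate a historical loss in current dollars using compound inflation."""
    return amount * (1 + annual_rate) ** years

def simulate_annual_loss(freq_per_year, mag_mu, mag_sigma, trials=10_000, seed=0):
    """Monte Carlo sketch: draw an event count per year, then a magnitude per event.

    Assumptions (not from the session): events arrive as a Poisson process,
    and each loss magnitude is lognormal with parameters mag_mu and mag_sigma.
    """
    rng = random.Random(seed)
    totals = []
    for _ in range(trials):
        # Count events in one year by summing exponential inter-arrival times.
        events = 0
        elapsed = rng.expovariate(freq_per_year)
        while elapsed < 1.0:
            events += 1
            elapsed += rng.expovariate(freq_per_year)
        totals.append(sum(rng.lognormvariate(mag_mu, mag_sigma) for _ in range(events)))
    return sorted(totals)

def percentile(sorted_values, p):
    """Return the value at fraction p (0..1) of an already sorted list."""
    index = min(len(sorted_values) - 1, int(p * len(sorted_values)))
    return sorted_values[index]

# Illustrative inputs only: one event every two years on average.
losses = simulate_annual_loss(freq_per_year=0.5, mag_mu=12.0, mag_sigma=1.0)
print("median annual loss:", round(percentile(losses, 0.5)))
print("90th percentile annual loss:", round(percentile(losses, 0.9)))
```

The output is a range of annual losses rather than a single per-record figure, which is the point of the bullet above: the analyst reports percentiles of a distribution, and historical loss amounts can be restated in current dollars before they feed the magnitude estimate.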

Justin gave a deeper look at data refinement at RiskLens, showing how raw data from thousands of loss events gets filtered by industry, geography, data type, threat actor, threat type and other categories. As an example of the subtle differences among industries, Justin noted that

- In finance and insurance, 13% of all data breaches occurred from malicious intent from an external actor.

- In healthcare, 20% of all data breaches occurred from malicious intent from an internal actor.
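The kind of filtering described above can be sketched in a few lines of Python. The record layout, the `breach_share` helper, and the sample events are all made up for illustration; they are not RiskLens data and do not reproduce the percentages Justin cited.

```python
from collections import Counter

def breach_share(events, industry):
    """Share of an industry's breaches by (actor, intent) category."""
    subset = [e for e in events if e["industry"] == industry]
    if not subset:
        return {}
    counts = Counter((e["actor"], e["intent"]) for e in subset)
    return {category: n / len(subset) for category, n in counts.items()}

# Placeholder records, invented for this sketch only.
events = [
    {"industry": "finance", "actor": "external", "intent": "malicious"},
    {"industry": "finance", "actor": "internal", "intent": "accidental"},
    {"industry": "finance", "actor": "internal", "intent": "accidental"},
    {"industry": "finance", "actor": "external", "intent": "accidental"},
    {"industry": "healthcare", "actor": "internal", "intent": "malicious"},
    {"industry": "healthcare", "actor": "internal", "intent": "accidental"},
]

for name in ("finance", "healthcare"):
    print(name, breach_share(events, name))
```

Computing shares per industry, rather than across the whole data set, is what lets a scenario be scoped with an estimate specific to the organization's sector.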

(Justin presented an extensive white paper on RiskLens data science research at the 2021 SIRACon. RiskLens incorporates the research in its product as data helpers, loss tables and content packs that include industry-specific data and scenarios ready for analysis.)

This sophisticated approach “enables your reporting and scenario scoping to be driven by precise industry estimates that are not just generic assumptions,” Ben added.

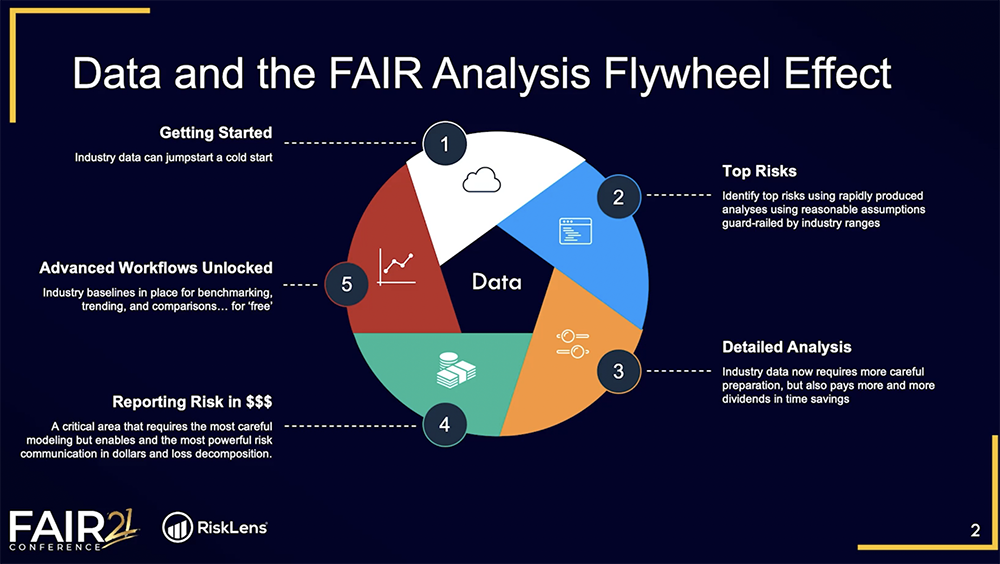

Prepared Industry Data Benefits: The FAIR Analysis Flywheel Effect

Ben and Justin called the practical application of prepared industry data the “flywheel effect.” As Ben said, “a beneficial and reinforcing process is called into place” as FAIR analysts “10x not just speed but the amount of analyses that can be run and the robustness and utility of them.”

The wheel starts spinning from a standing start with industry data that augments or substitutes for in-house data as an organization begins FAIR analysis. A quick win follows, using data plus the RiskLens Rapid Risk Assessment capability to identify top risks, then on through the cycle to advanced, more automated workflows as the organization builds its stores of scenarios and data on the RiskLens platform. “What used to take a week now takes an hour,” Ben said.

Get more detail – see all of the RiskLens presentation at FAIRCON21: Case Study - Accelerating FAIR Analyses by 10x with Industry Data (FAIR Institute membership required).