“From No Data to Drowning in Data – A Reality Check”: Jack Jones Speaks at RSA

“Your organization has data regarding umpteen thousand unpatched vulnerabilities…So what? What decisions need to be made?” FAIR Institute Chairman Jack Jones asked an audience at the RSA Conference this week. His talk was a mini master class in critical thinking for information security, at a time when CISOs and cyber risk analysts face an ocean of data pouring in from threat intel, pen testing, audit reports, industry surveys and other sources.

Jack’s first piece of advice was about appetite control: stop yourself and your colleagues from saying “We don’t have enough data.” There will always be uncertainty; you just have to know how to handle it in cyber risk analysis.

The first step in that direction, Jack said, is to understand that there is raw data (coming in from telemetry scans) and there is interpreted data, which is only as good as the model used to interpret it. Unfortunately, most organizations rely on what Jack calls the “mental model”: basically, a gut check by security staff.

Better decision making starts with a formal model (such as Jack’s creation, Factor Analysis of Information Risk, or FAIR) that shows you what data you need by scoping what’s important for answering your question, how to interpret that data, and how to make your assumptions explicit so they can be challenged.
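To make the idea of a formal model concrete, here is a minimal sketch of FAIR’s top-level decomposition: loss event frequency (LEF) is threat event frequency (TEF) times vulnerability (the probability that a threat event becomes a loss event), and risk is LEF times loss magnitude (LM). The class and function names and the scenario numbers are hypothetical, and single point estimates are a simplification; the point is that every assumption is a named, visible input that a colleague can challenge.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FairInputs:
    """Hypothetical, explicitly stated assumptions for one loss scenario."""
    threat_event_frequency: float  # threat events per year (assumed)
    vulnerability: float           # probability a threat event becomes a loss event, 0..1 (assumed)
    loss_magnitude: float          # loss per loss event, in dollars (assumed)


def loss_event_frequency(inputs: FairInputs) -> float:
    # LEF = TEF x Vulnerability (the frequency branch of the FAIR taxonomy)
    if not 0.0 <= inputs.vulnerability <= 1.0:
        raise ValueError("vulnerability must be a probability between 0 and 1")
    return inputs.threat_event_frequency * inputs.vulnerability


def annualized_loss_exposure(inputs: FairInputs) -> float:
    # Risk = LEF x LM, expressed as expected loss per year
    return loss_event_frequency(inputs) * inputs.loss_magnitude


# Hypothetical scenario: 4 threat events a year, 25% succeed, $200k per loss event
scenario = FairInputs(threat_event_frequency=4.0, vulnerability=0.25, loss_magnitude=200_000.0)
print(annualized_loss_exposure(scenario))  # 200000.0
```

Because the inputs are explicit fields rather than a gut feel, a disagreement becomes a specific question (“is 25% the right vulnerability estimate?”) instead of a debate about the final number.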

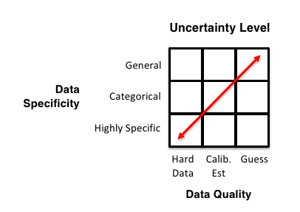

For evaluating data sources, Jack offered this simple chart, showing uncertainty rising as data moves from the highly specific to the general and from hard data to guesswork. But Jack strongly advises against letting the perfect get in the way of the good: relatively few data points from a solid subject matter expert in your organization, run through calibrated estimation and expressed as a range (using, for instance, a Monte Carlo engine), are actually a great way to turn data into decision support.
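As a rough illustration of turning a few expert estimates into a range, the sketch below takes calibrated minimum / most likely / maximum estimates for loss event frequency and loss magnitude and runs them through a simple Monte Carlo simulation. Several choices here are assumptions rather than anything from the talk: the PERT distribution (a common choice for three-point estimates), multiplying one frequency draw by one magnitude draw per trial (a simplification of how full FAIR tools model annual loss), and the function names and input ranges, which are hypothetical.

```python
import random
import statistics


def pert_sample(rng: random.Random, low: float, mode: float, high: float) -> float:
    """Draw one value from a PERT distribution given min / most likely / max."""
    if not low <= mode <= high or low == high:
        raise ValueError("need low <= mode <= high and low < high")
    span = high - low
    # Standard PERT shape parameters, mapped onto a beta distribution on [low, high]
    alpha = 1 + 4 * (mode - low) / span
    beta = 1 + 4 * (high - mode) / span
    return low + rng.betavariate(alpha, beta) * span


def simulate_annual_loss(lef_range, lm_range, trials=10_000, seed=42):
    """Simplified Monte Carlo: each trial multiplies one LEF draw by one LM draw."""
    rng = random.Random(seed)  # fixed seed so results are reproducible
    return [pert_sample(rng, *lef_range) * pert_sample(rng, *lm_range) for _ in range(trials)]


# Hypothetical calibrated estimates from a subject matter expert
losses = simulate_annual_loss(
    lef_range=(0.1, 0.5, 2.0),              # loss events per year
    lm_range=(50_000, 150_000, 1_000_000),  # dollars per loss event
)
deciles = statistics.quantiles(losses, n=10)  # 9 cut points: 10th..90th percentiles
print(f"10th pct: {deciles[0]:,.0f}  median: {statistics.median(losses):,.0f}  "
      f"90th pct: {deciles[-1]:,.0f}")
```

Reporting the result as a spread (10th percentile, median, 90th percentile) rather than a single number keeps the uncertainty visible to decision makers instead of hiding it.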

Jack left the audience with a warning and an action plan.

The warning: “Risk quantification is becoming a bigger deal every day, which means vendors are climbing onboard in their marketing.” Any tool that claims to score risk is using a model, so ask what the model is and whether it is open source and available for scrutiny (like FAIR).

And the action plan:

“In the first three months following this presentation you should:

- Identify your organization’s most important assets

- Triage the data quality for those assets

- Become trained in calibrated estimation

“Within six months you should:

- Adopt or define a risk model for your organization (e.g., FAIR)

- Evaluate the models your vendor products use to do risk/severity ‘scoring’

- Evolve your security metrics to become risk metrics (add the so what)”
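To show what “adding the so what” can look like, the sketch below compares ranking assets by a security metric (a raw count of unpatched vulnerabilities) with ranking them by a risk metric (annualized loss, computed as loss event frequency times loss magnitude). The asset names and all numbers are hypothetical assumptions chosen for illustration; in practice the frequency and magnitude inputs would come from a model such as FAIR and calibrated estimates.

```python
from typing import NamedTuple


class Asset(NamedTuple):
    name: str
    unpatched_vulns: int         # security metric: a raw count
    loss_events_per_year: float  # assumed loss event frequency (LEF)
    loss_per_event: float        # assumed loss magnitude (LM), in dollars

    @property
    def annualized_loss(self) -> float:
        # Risk metric: LEF x LM, the "so what" behind the count
        return self.loss_events_per_year * self.loss_per_event


# Hypothetical assets with hypothetical estimates
assets = [
    Asset("intranet-wiki", 1_200, 0.5, 20_000.0),
    Asset("payments-db", 40, 0.2, 2_000_000.0),
    Asset("marketing-site", 300, 1.0, 50_000.0),
]

by_count = sorted(assets, key=lambda a: a.unpatched_vulns, reverse=True)
by_risk = sorted(assets, key=lambda a: a.annualized_loss, reverse=True)
print([a.name for a in by_count])  # ['intranet-wiki', 'marketing-site', 'payments-db']
print([a.name for a in by_risk])   # ['payments-db', 'marketing-site', 'intranet-wiki']
```

In this made-up example the asset with the fewest unpatched vulnerabilities carries the most risk, which is exactly the kind of decision-relevant difference a raw count hides.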