Jack Jones Talks Cyber Risk Measurement Best Practices at RSA Conference 2023

At RSAC23 this week, FAIR Institute Chairman Jack Jones challenged an audience of 400 in two seminars to move beyond today’s common cyber risk measurement practices that don’t reliably measure risk and re-focus on some basic techniques advanced in Factor Analysis of Information Risk (FAIR™).

Cutting to the heart of the problem, Jack said, “We exist as a profession to help our organizations manage the frequency and magnitude of loss event scenarios. Today’s common risk measurement practices do not support that objective.” He pointed specifically to the use of control frameworks like NIST CSF or maturity models like C2M2 as stand-ins for true risk measurement.

Done right, cyber risk analysis should deliver results that enable prioritization of cybersecurity projects based on cost-benefit analysis and that communicate risk in business terms the organization understands, Jack said. He outlined three requirements for reaching that standard.

3 Requirements for Best Cyber Risk Measurement Practices

1. Clarity on what’s to be measured

“Without clear scoping, the odds of measuring risk accurately are much lower, regardless of whether you’re doing qualitative or quantitative measurement,” he said.

2. An accurate, open model for measurement

All risk measurement (that is, measurement of loss event frequency and magnitude) runs on models and all models involve assumptions – but only open models such as FAIR or NIST 800-30 enable us to understand, challenge and accept (or not) those assumptions, Jack said. The mental models behind subjective judgements made by risk analysts or closed, proprietary models inside risk analytics software don’t meet that standard.

3. Data, accounting for uncertainty

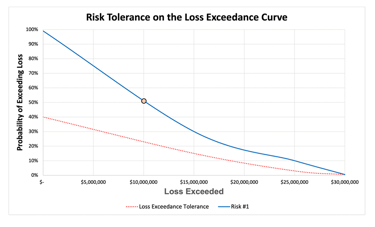

Jack addressed one of the common objections to quantitative risk measurement: we don’t have sufficient data. “Data scarcity is never a legitimate argument for not doing quantitative risk measurement,” Jack argued. “There are well-established methods for dealing with sparse data. You just need to faithfully represent uncertainty in your inputs and outputs.” With Monte Carlo simulations, FAIR analysis produces results as ranges and distributions across various confidence levels (see the chart).
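To make the idea of representing uncertainty concrete, here is a minimal Python sketch of a Monte Carlo simulation that combines loss event frequency and loss magnitude into a distribution of annual loss. It is an illustration only, not the FAIR Institute's tooling or anything shown in the talk: the function names, the triangular distributions (a simple stand-in for calibrated range estimates), the Poisson event count, and every dollar figure are assumptions made up for the example.

```python
import math
import random


def poisson(rng, lam):
    """Draw a Poisson-distributed event count (Knuth's method, fine for small lam)."""
    threshold = math.exp(-lam)
    count, product = 0, rng.random()
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def simulate_annual_loss(freq_range, magnitude_range, trials=10_000, seed=1):
    """Return sorted simulated annual losses.

    freq_range and magnitude_range are hypothetical (min, most likely, max)
    estimates: events per year and loss per event, respectively.
    """
    rng = random.Random(seed)
    f_low, f_mode, f_high = freq_range
    m_low, m_mode, m_high = magnitude_range
    losses = []
    for _ in range(trials):
        # Uncertain input 1: how often loss events occur this simulated year.
        rate = rng.triangular(f_low, f_high, f_mode)
        events = poisson(rng, rate)
        # Uncertain input 2: how much each event costs.
        losses.append(
            sum(rng.triangular(m_low, m_high, m_mode) for _ in range(events))
        )
    return sorted(losses)


def percentile(sorted_values, pct):
    """Nearest-rank style percentile lookup on an already sorted list."""
    index = min(len(sorted_values) - 1, int(pct / 100 * len(sorted_values)))
    return sorted_values[index]


# Made-up scenario: 0.1 to 2 events per year, $50K to $1.5M per event.
losses = simulate_annual_loss((0.1, 0.5, 2.0), (50_000, 200_000, 1_500_000))
for pct in (10, 50, 90):
    print(f"{pct}th percentile annual loss: ${percentile(losses, pct):,.0f}")
```

The output is a range at several percentiles rather than a single number, which is the point Jack makes: sparse data shows up as wider input ranges and a wider output distribution, not as a reason to skip the measurement.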

Beyond data, Jack acknowledged other concerns about quantitative risk measurement: it requires training to break old habits and adjust to a new paradigm, and it increases the cost of risk measurement through the extra effort needed to gather data and research the business problems you are trying to solve. But those added costs are offset by fewer wasted resources and better focus, which also translates into a lower probability of “very bad days” for organizations.

The “Siren Song of Automation” for Cyber Risk Measurement

Is there an easy button? Jack concluded with thoughts on the “siren song of automation” for risk analytics. The good news: “Better loss magnitude data is becoming available.” The not-so-good news: “Publicly available loss data is incomplete, threat data is very easy to misinterpret/misapply, controls data is a mess.”

Jack is working on the controls problem with his FAIR Controls Analytics Model (FAIR-CAM™) that enables empirical measurement of the effectiveness of individual controls and controls systems. But for now, “automated cyber risk measurement is incredibly easy to screw up and when it’s screwed up, all you’ve done is automate poor decision-making.”