Estimating unknowns

We often run into the problem of estimating a number about which we seemingly have no idea. For example, how many severe defects probably remain undiscovered in software that is now being deployed to production? The answers I have gotten to this question have been:

(a) “None, because QA would already have found them and we fixed them.”

(b) “We cannot know until we deploy to production and wait 30 days for new bug reports.”

(c) “I have no earthly idea.”

Surely we can do better than this!

Using ranges

We can put reasonable and often useful bounds on estimates of highly uncertain numbers using order-of-magnitude thinking. I have used this technique for many years, both on my own estimates (including for information risk) and in querying colleagues, and have usually found the results illuminating and useful. You need only some familiarity with the subject. (There are problems, of course, about which we may have no earthly idea, such as the number of neutrinos passing through our bodies in one second. It’s huge!)

Example

Let’s consider the number of pages in some random edition of the Christian Bible. Which edition hardly matters, as we shall see. The first step is to take an impossibly low number and an impossibly high number to bracket the range. It’s easy if we limit ourselves to powers of 10. Each power of 10 is one order of magnitude.

Could the Bible be as short as one page? Certainly not. We know it is a rather hefty book. Ten pages? No. A hundred? Again, no. A thousand? Well, I would be unwilling to bet that it’s more than a thousand pages.

How about the other end? Could it be a million pages? No. A hundred thousand? No. Ten thousand? No – that would be at least 4 or 5 very big books, and we know it’s only one book. A thousand? Again I am unwilling to bet it’s less than a thousand.

So we already know that some edition of the Bible is almost certainly between 100 and 10,000 pages. We may now feel, based on this preliminary ranging and other experience, that the answer is in the neighborhood of 1000 pages. So can we narrow the range a bit more?
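The bracketing step can be sketched in a few lines of Python. This is only an illustration: the function name `bracket` and the two judgment functions are hypothetical, and the answers encoded in them are simply the ones given in the Bible example above.

```python
def bracket(ladder, surely_more_than, surely_less_than):
    """Return (low, high) from a ladder of candidate values.

    low  = the largest rung we would bet the true value exceeds
    high = the smallest rung we would bet the true value falls below
    """
    low = max(rung for rung in ladder if surely_more_than(rung))
    high = min(rung for rung in ladder if surely_less_than(rung))
    return low, high


# Powers of 10 from 1 to 1,000,000: one rung per order of magnitude.
powers_of_ten = [10 ** k for k in range(7)]

# Hypothetical judgments for the page count, taken from the text:
# confident it is more than 1, 10 and 100 pages, and
# confident it is less than 10,000, 100,000 and 1,000,000 pages.
low, high = bracket(
    powers_of_ten,
    surely_more_than=lambda n: n <= 100,
    surely_less_than=lambda n: n >= 10_000,
)
print(low, high)  # 100 10000
```

The rung at 1000 is left out of both judgments on purpose: it is the one value we were unwilling to bet on in either direction.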

Instead of limiting ourselves to powers of 10, let’s tighten it up a bit to half a power of 10, that is, the square root of 10 (about 3.16). We’ll use 3 for convenience.

Now, do we think our Bible is more than 300 pages? Yes. We are pretty confident it is several hundred pages. Is it less than 3000? Again, yes. It’s long but not that long. So we have succeeded in tightening the range from two orders of magnitude, 100 to 10,000, to one order of magnitude, 300 to 3000. Progress!

We could stop here, feeling that we now have enough information for whatever the purpose is. (You need to know how much precision you need. This exercise can help you think about that.)

Or we could try to narrow it further. Having moved from powers of 10 to powers of the square root of 10 (roughly 3), we could next try powers of 2 (“binary orders of magnitude”) and so potentially narrow the range to 500 to 2000 pages. Of course, you can go as far as you like with this procedure, stopping when you become uncomfortable with narrowing the range any further.
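To see how much each refinement buys, it can help to express the width of a range in orders of magnitude, which is the base-10 logarithm of the ratio of the upper bound to the lower bound. The short Python sketch below does this for the three ranges in the example; the helper name `width_in_decades` is my own, not a standard term.

```python
import math


def width_in_decades(low, high):
    """Width of the range [low, high] in orders of magnitude (powers of 10)."""
    return math.log10(high / low)


# The three successive ranges from the Bible example.
ranges = {
    "powers of 10": (100, 10_000),
    "powers of about 3": (300, 3_000),
    "powers of 2": (500, 2_000),
}

for name, (low, high) in ranges.items():
    width = width_in_decades(low, high)
    print(f"{name}: {low} to {high} pages, {width:.1f} orders of magnitude")
```

The last range, 500 to 2000, spans a factor of 4, or about 0.6 of an order of magnitude.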

A useful degree of precision

This procedure is quick and often yields useful insights into the probable magnitude of a number and into the extent of our uncertainty about it. It is surprising how often the result is “good enough.” And it may quickly show us which of several highly uncertain numbers are worth the effort to research more carefully. As Douglas Hubbard says, you know more than you think you do, what you do know is often good enough, and it is usually only one or two numbers among many that are worth buying more precision about.

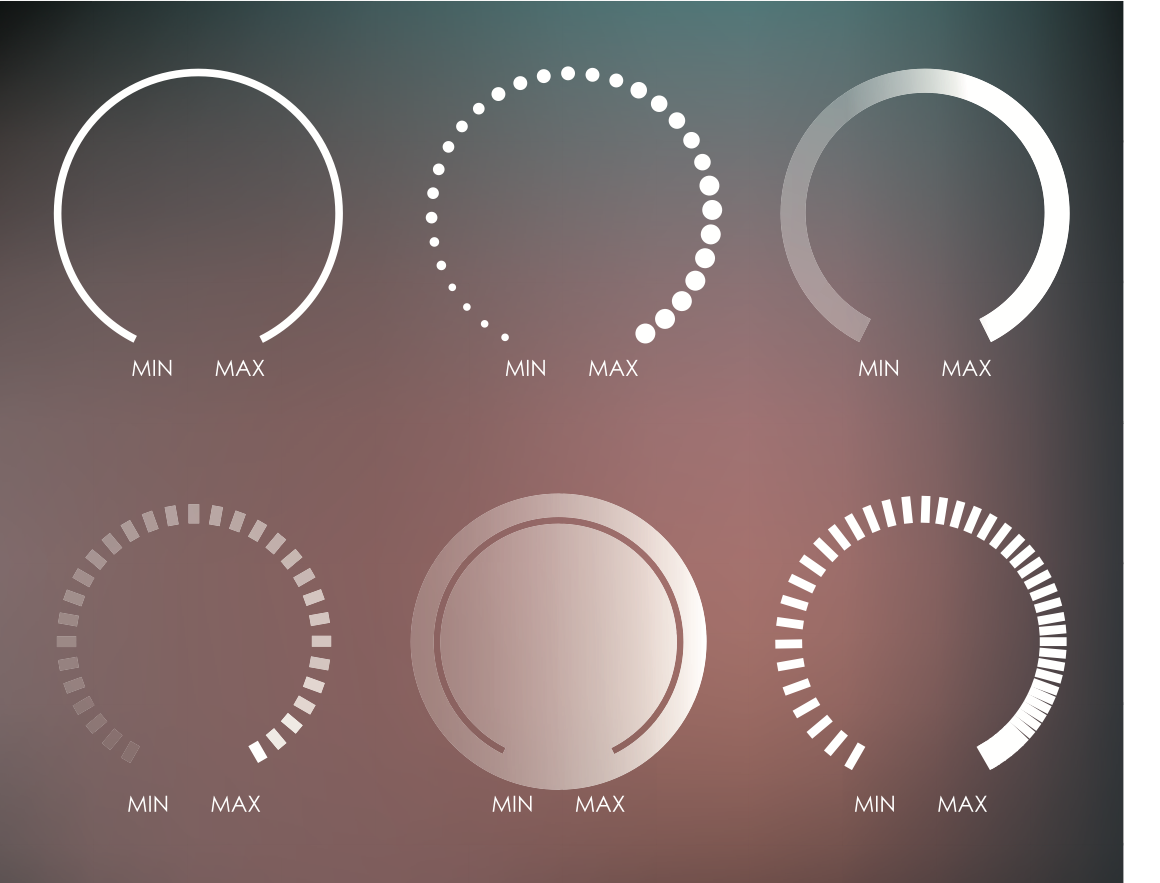

Postscript: This method is inspired by the scale knobs on many kinds of electronic test equipment, which often have to accommodate huge ranges. A voltmeter may need to measure from millivolts or microvolts to 1000 volts, a span of 6 to 9 orders of magnitude. Such instruments often have range settings using the 1-2-5-10 scheme: 1, 2, 5 and 10 millivolts of sensitivity, and so on up the scale. A useful way of thinking!
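For the curious, the 1-2-5-10 ladder is easy to generate. The following Python sketch assumes whole-number settings in a base unit such as millivolts; the function name `one_two_five` is made up for this illustration.

```python
def one_two_five(low_exp, high_exp):
    """List the 1-2-5 settings from 10**low_exp up to and including 10**high_exp.

    Exponents are assumed to be non-negative integers so that every
    setting is a whole number of the base unit.
    """
    settings = []
    for exp in range(low_exp, high_exp):
        for multiplier in (1, 2, 5):
            settings.append(multiplier * 10 ** exp)
    settings.append(10 ** high_exp)  # close the ladder at the top decade
    return settings


print(one_two_five(0, 3))
# [1, 2, 5, 10, 20, 50, 100, 200, 500, 1000]

# From 1 millivolt to 1000 volts (10**6 millivolts) is six decades.
print(len(one_two_five(0, 6)))  # 19
```

Each step multiplies the previous setting by 2 or 2.5, so the rungs are roughly evenly spaced on a logarithmic scale, three per order of magnitude.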
