Skeptics about the FAIR model love to scoff at quantitative risk analysis and dismiss it as mere “guesswork.” I have encountered this assertion several times while conducting analyses and I welcome the challenge each time; I view it as an invitation to a discussion.

The words “guesswork” and “guessing” usually come up during the data collection or results phase of a risk analysis. The conversation often unfolds somewhere along these lines:

“This is all just guesswork.” – Skeptic

“Hmm, that is a FAIR concern. Let’s step back a moment and reflect on the process... We’ve engaged XYZ Subject Matter Experts (SMEs) to ask how often threats are trying to harm asset A, and we’ve evaluated the controls around asset A to estimate how likely those threats are to overcome them and successfully cause harm. Each range is supported by a rationale that documents our assumptions as well as any applicable industry references. Do you think that was a beneficial exercise?” – Believer (a.k.a. Me)

“Yes, but… it is still just guessing.” – Skeptic

“OK. Let me ask a similar question. Have you ever engaged XYZ business SMEs to gather estimates for lost revenue, the number of people who would be involved in responding to an event, how long they would spend responding, crisis communication costs, etc.?” – Me

“No.” – Skeptic

“Wait. Then how are you selecting a risk rating now?” – Me

Here, the Skeptic usually musters a small grin or gives a nervous chuckle, and the “guesswork” discussion ends. Then, the conversation shifts toward focusing on the ranges, rationale and results. I always enjoy this moment, because it demonstrates how FAIR drives toward objectivity in analyses. Having set the scene, let me delve into subjectivity vs. objectivity.

The Subjective vs. the Objective in Cyber Risk Analytics

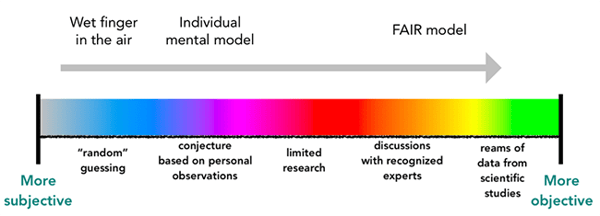

In FAIR, subjectivity and objectivity are not viewed as binary concepts. Instead, they exist on a spectrum.

At a high level, the subjective end of the spectrum is more feeling-based. On this side of the spectrum, it is common for an analyst to use the “wet finger in the air” approach or his or her own mental (internal) model to evaluate risk and assign high/medium/low ratings. In contrast, the objective end of the spectrum is fact-based. On this side, data is collected, documented and measured. A great way to move closer to this end of the spectrum is to have a model to use as an external frame of reference.

The FAIR model provides structure and an external frame of reference that helps foster a logical and consistent evaluation of risk. Working through the FAIR model also helps air out assumptions that otherwise might have been left unstated. During this process, assumptions move from the mind of the analyst to the written rationale. And, trust me, it is better to debate an assumption that has been clearly identified and articulated than to debate, or guess at, how someone else feels or what someone else thinks about something.

The ranges gathered during discussions with recognized experts are documented. Over time, the ranges can be refined as additional data is gathered. Through this iterative process, the ranges and the subsequent results move the analysis farther along the spectrum toward objectivity. The path to objectivity becomes brighter with each iteration.
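To make the idea of refining ranges concrete, here is a minimal Python sketch of how documented ranges might feed a simple simulation. Treat it as an illustration under stated assumptions, not as the FAIR method itself: every number is invented, the function names are hypothetical, and I use a triangular distribution purely for brevity (FAIR tooling often uses a PERT-style distribution instead). Each range is a (minimum, most likely, maximum) estimate of the kind an SME might give.

```python
import random

def simulate_annual_loss(tef, vuln, loss, trials=10_000, seed=0):
    """Sample annualized loss from three (min, most_likely, max) ranges.

    tef  : threat events per year
    vuln : probability that a threat event overcomes the controls
    loss : loss magnitude per loss event, in dollars
    Simplification: loss event frequency is treated as tef * vuln
    rather than sampling a discrete number of events.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable

    def draw(estimate):
        low, mode, high = estimate
        return rng.triangular(low, high, mode)

    results = []
    for _ in range(trials):
        loss_event_frequency = draw(tef) * draw(vuln)
        results.append(loss_event_frequency * draw(loss))
    results.sort()
    return results

def percentile(sorted_values, q):
    """Return the value at quantile q (0..1) of an already sorted list."""
    return sorted_values[int(q * (len(sorted_values) - 1))]

def spread(sorted_values):
    """Width of the 10th-to-90th percentile band."""
    return percentile(sorted_values, 0.9) - percentile(sorted_values, 0.1)

# Hypothetical first-pass ranges from initial SME interviews.
initial = simulate_annual_loss(
    tef=(1, 4, 12), vuln=(0.05, 0.2, 0.6), loss=(10_000, 50_000, 400_000)
)

# Hypothetical refined ranges after more data has been gathered.
refined = simulate_annual_loss(
    tef=(2, 4, 6), vuln=(0.1, 0.2, 0.3), loss=(30_000, 50_000, 90_000)
)

for label, losses in (("initial", initial), ("refined", refined)):
    print(
        f"{label}: 10th={percentile(losses, 0.1):,.0f} "
        f"median={percentile(losses, 0.5):,.0f} "
        f"90th={percentile(losses, 0.9):,.0f}"
    )
```

The point of the sketch is not the specific output but the shape of the exercise: the inputs are written down, anyone can challenge them, and tightening a range with better data visibly tightens the result.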

So, go forth and drive toward objectivity with the FAIR model! Or just scoff at quantitative analysis and call it guesswork. If you are still a skeptic, I’d recommend reading Jack Jones’ excellent blog post on this topic, Is Cyber Risk Measurement Just “Guessing”? But really, I would encourage trying quantitative analysis with FAIR as a means of moving beyond subjectivity toward objectivity.