With all the time and money that infosec professionals invest in controls – implementing, patching, auditing, policy promulgation, etc. – you’d think they would be driving control stacks like finely tuned machines. Yet post-breach investigations routinely find that the organization couldn’t recognize and maintain the controls that mattered most.

What’s the problem? Cybersecurity staff may be able to give you a ready answer on a “maturity score” showing how well they have followed the recommendations of controls frameworks, but they likely don’t have visibility into some critical success metrics. For instance…

1. We don’t know how effective our controls are at reducing cyber risk

Control assessment practices typically rate control conditions with numbers (1-5) or colors (red, yellow, green), but these are subjective ratings, not units of measurement like percentages or dollars. As a result, they can’t be reliably translated into risk reduction.

Following the principles of FAIR™ (Factor Analysis of Information Risk), risk is the “probable frequency and probable magnitude of future loss”, so the effectiveness of controls should be measurable in the tangible terms of reduction in the frequency and magnitude of loss.
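To make “reduction in frequency and magnitude” concrete, here is a minimal sketch in Python. It is not the FAIR model itself: it uses single point estimates where a real FAIR analysis would normally work with ranges, and every number, as well as the function name `annualized_loss`, is a hypothetical chosen only for illustration.

```python
def annualized_loss(events_per_year: float, loss_per_event_usd: float) -> float:
    """Expected annual loss: loss event frequency times loss magnitude."""
    return events_per_year * loss_per_event_usd


# Hypothetical point estimates, chosen only for illustration.
baseline = annualized_loss(events_per_year=0.5, loss_per_event_usd=2_000_000)

# Assume the control cuts event frequency by 40% and loss magnitude by 25%.
with_control = annualized_loss(
    events_per_year=0.5 * (1 - 0.40),
    loss_per_event_usd=2_000_000 * (1 - 0.25),
)

risk_reduction_usd = baseline - with_control
risk_reduction_pct = 100 * risk_reduction_usd / baseline

print(f"Baseline exposure:     ${baseline:,.0f} per year")
print(f"Exposure with control: ${with_control:,.0f} per year")
print(f"Risk reduction:        ${risk_reduction_usd:,.0f} ({risk_reduction_pct:.0f}%)")
```

Unlike a 1-5 score or a color, the result is expressed in dollars and percentages, so it can be compared directly with what the control costs.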


2. We don’t know how any control interacts with our other controls

An organization’s controls form an ecosystem, with every control in an upstream or downstream relationship with other controls. Take the “human control”: the person deciding whether or not to click on a phishing email. That person’s effectiveness as a control is influenced by the effectiveness of the upstream controls, awareness training and threat intelligence.

But when a vulnerability scan finds a patch is missing, the severity gets rated as if the control stands alone. The hidden reality: the condition of other controls may make the vulnerability more or less severe.
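A rough sketch of that interplay, in Python, is below. It assumes a deliberately simplified chain in which awareness training lowers the click rate and a click only leads to compromise if the exploit then succeeds against the unpatched system. The function names, the independence of the two stages, and all the rates are illustrative assumptions, not figures from FAIR-CAM.

```python
def click_rate(untrained_click_rate: float, training_effectiveness: float) -> float:
    """Click rate after awareness training (the upstream control) takes effect."""
    return untrained_click_rate * (1 - training_effectiveness)


def compromise_probability(click: float, exploit_success_rate: float) -> float:
    """Chance a delivered phishing email ends in compromise.

    Assumes the click and the exploit are independent stages in series.
    """
    return click * exploit_success_rate


# The same missing patch in both environments (hypothetical 90% exploit success).
EXPLOIT_SUCCESS_UNPATCHED = 0.9

strong_upstream = compromise_probability(
    click_rate(untrained_click_rate=0.30, training_effectiveness=0.8),
    EXPLOIT_SUCCESS_UNPATCHED,
)
weak_upstream = compromise_probability(
    click_rate(untrained_click_rate=0.30, training_effectiveness=0.1),
    EXPLOIT_SUCCESS_UNPATCHED,
)

print(f"Strong training: {strong_upstream:.3f} compromise probability per email")
print(f"Weak training:   {weak_upstream:.3f} compromise probability per email")
```

The scanner would report an identical finding in both cases, yet under these assumptions the exposure differs several times over depending on the upstream control.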

3. We don’t know how to evaluate current or new control investments

Since we can’t quantitatively measure the effectiveness of controls for risk reduction…well, our whole program is open to question. We can’t meaningfully report on KPIs. We don’t know our most valuable or least valuable control. We can’t tell if an existing control meets its intended performance or, even if operating properly, whether its contribution to risk reduction justifies its cost or replacement with a new control.
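If risk reduction were available in dollars, the comparison could look something like the Python sketch below. The `Control` class, the control names, and every cost and risk-reduction figure are hypothetical; the point is only to show the kind of ranking that quantified control effectiveness would make possible.

```python
from dataclasses import dataclass


@dataclass
class Control:
    name: str
    annual_cost_usd: float
    annual_risk_reduction_usd: float  # assumed to come from a quantitative analysis

    @property
    def net_benefit_usd(self) -> float:
        """Risk reduction left over after paying for the control."""
        return self.annual_risk_reduction_usd - self.annual_cost_usd

    @property
    def return_per_dollar(self) -> float:
        """Dollars of risk reduced for each dollar spent."""
        return self.annual_risk_reduction_usd / self.annual_cost_usd


# Hypothetical portfolio, for illustration only.
controls = [
    Control("Awareness training", annual_cost_usd=80_000, annual_risk_reduction_usd=200_000),
    Control("Legacy IDS", annual_cost_usd=120_000, annual_risk_reduction_usd=60_000),
    Control("Email filtering", annual_cost_usd=50_000, annual_risk_reduction_usd=400_000),
]

# Most valuable control first.
ranked = sorted(controls, key=lambda c: c.return_per_dollar, reverse=True)

for c in ranked:
    print(f"{c.name:<20} net benefit ${c.net_benefit_usd:>10,.0f}  "
          f"return per dollar {c.return_per_dollar:.1f}")
```

A control whose net benefit is negative, like the hypothetical legacy IDS here, is a candidate for replacement even if it is operating exactly as designed.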

FAIR-CAM™ brings the clarity of quantitative cyber risk analysis to controls

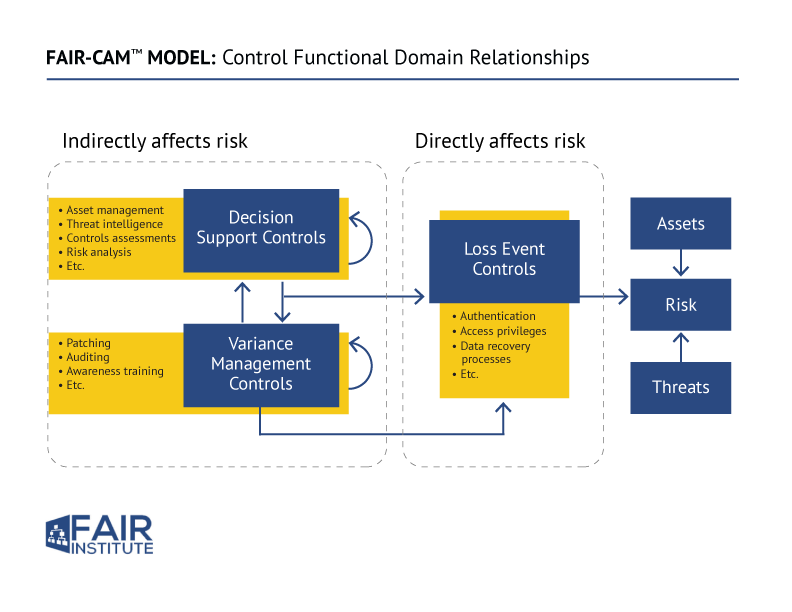

Jack Jones, creator of the FAIR standard for cyber risk quantification, recently released the FAIR Controls Analytics Model (FAIR-CAM) for understanding the effectiveness of controls in risk reduction.

The model:

- Categorizes controls by type and function

- Sets them in relation to each other, clarifying their interplay

- Shows the direct and indirect effect of controls on risk

- Assigns units of measurement to control performance, enabling reliable quantitative analysis of the effectiveness of controls and control systems

Learn more here (FAIR Institute membership required):

Detailed Description of the FAIR-CAM Standard

Video: Jack Jones Introduces FAIR-CAM at the 2021 FAIR Conference

“If we want to effectively manage a problem space like cybersecurity, we have to account for its complex nature,” Jack Jones says. The simplistic, non-systemic, non-quantitative outlook on controls has been “really a strong contributor to the challenges we face…and why we lose some of the battles we lose to the bad guys.”