Many risk professionals are truly gifted. Gifted with the gift of gab, that is. Until recently, they haven't had any other choice. Think about it: risk professionals routinely use ordinal scales to “measure” risk. How does slapping a 1, 2, 3, 4, or 5 label on impacts and likelihoods (in effect, “giftwrapping” uncertainty and ambiguity) qualify as risk “measurement”?

Does this “measurement” reduce uncertainty? Does this sort of “measurement” tell decision makers how much loss exposure the organization faces?

I want to give a gift to you, dear reader. As a proud Irishwoman who can recognize blarney when I see it, I want to provide a distinction between Risk Measurement and Risk Blarney.

Risk Blarney

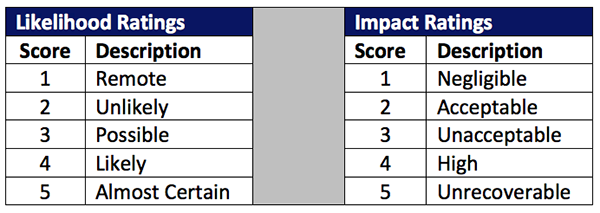

Risk blarney consists of giving the appearance of measuring risk, while not providing any meaningful measurements at all. It is equivalent to saying a lot, with confidence and in a convincing way, and yet not saying much at all. It does not reduce uncertainty. It is ambiguous and leaves ample room for interpretation. It looks something like this:

Risk = (likelihood score) x (impact score)

“Measuring” different risks via scoring:

Risk A = 1 x 4 = 4

Risk B = 2 x 2 = 4

Risk C = 2 x 4 = 8

Risk professional interpretation:

Risks A and B can be put aside because they are both only 4 (i.e., Risk A is both remote likelihood and high impact while Risk B is unlikely and acceptable). However, Risk C is clearly worse since it’s an 8 and 8 > 4. In fact, Risk C is unlikely and high, which is obviously worse than remote and high…

I think (hope) you get the point. Using ordinal labels to “measure” risk is not very meaningful as it doesn’t reduce or express uncertainty.

Besides, Risk A’s 4 may not equal Risk B’s 4. And one decision maker’s interpretation of a 4 may differ from another’s. Oh, and the ratings don’t answer "how much" loss exposure is associated with them.
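For readers who like to see the arithmetic run, here is a minimal Python sketch of the scoring scheme above. The dictionary and function names are my own illustrative choices, not part of any standard; the scores are the ones from Risks A, B, and C.

```python
# Ordinal (likelihood score, impact score) pairs from the example above.
ordinal_scores = {"A": (1, 4), "B": (2, 2), "C": (2, 4)}


def ordinal_risk(likelihood_score, impact_score):
    """Multiply two ordinal labels, as the scoring approach does."""
    return likelihood_score * impact_score


ratings = {name: ordinal_risk(*pair) for name, pair in ordinal_scores.items()}

for name, rating in ratings.items():
    print(f"Risk {name} = {rating}")

# Risks A and B collapse into the same rating even though their
# likelihood and impact labels are different.
print("A and B tie:", ratings["A"] == ratings["B"])
```

The tie between A and B is the whole problem: the product hides which input drove the number, and it carries no units.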

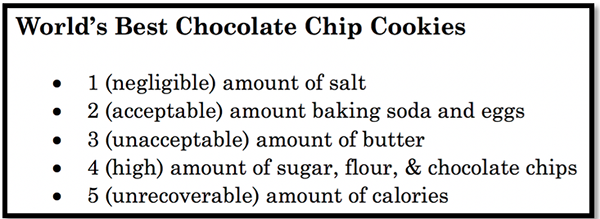

And, in case you don’t believe me, try baking cookies with the recipe provided below that applies Risk Blarney measurement methods to the world of baking:

OK. Enough blarney and malarkey. Let’s talk about....

Risk Measurement

To measure something is to reduce uncertainty. The standard FAIR methodology defines risk as the probable frequency and probable magnitude of future loss. So, putting it together, risk measurement should reduce the uncertainty associated with the frequency and magnitude of future loss.

It should answer how often in the proper units (frequency / probability) and how much in the proper form (monetary or mission impact). It should not simply use ordinal labels or ratings.

Most significantly, it should provide clear and meaningful information to decision makers that will enable them to make well-informed decisions about risk.

Let’s revisit Risks A, B, and C to see how they can change.

Doing a quick-and-dirty measurement via triage*:

Risk A = 0.01 (1 in 100 years) x $100k = $1k

Risk B = 0.1 (1 in 10 years) x $1k = $100

Risk C = 0.1 (1 in 10 years) x $100k = $10k

It’s obvious that $1k ≠ $100. In other words, Risk A is not equal to Risk B. Risk A > Risk B. In fact, it is ten times greater than Risk B. Consequently, the two shouldn’t be presented with the same label, as that would hide the difference between them from decision makers. In short, when risk is truly measured, the measurements reduce the uncertainty surrounding “how much risk is associated with a given scenario” by giving a clear-cut answer. Put simply, risk measurement should provide the amount.
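The same triage can be written as a few lines of Python. This is only a sketch of the quick-and-dirty calculation above: the names are hypothetical, and each frequency is expressed as "one event every N years" so the division stays exact.

```python
# (years between expected loss events, loss per event in dollars),
# taken from the triage figures above.
triage_inputs = {"A": (100, 100_000), "B": (10, 1_000), "C": (10, 100_000)}


def annualized_loss(years_between_events, loss_per_event):
    """Annual frequency (1 / years between events) times loss magnitude."""
    return loss_per_event / years_between_events


exposure = {name: annualized_loss(*v) for name, v in triage_inputs.items()}

# Rank the risks by dollars per year, largest first.
for name, dollars in sorted(exposure.items(), key=lambda kv: kv[1], reverse=True):
    print(f"Risk {name}: ${dollars:,.0f} per year")

# Risk A and Risk B shared an ordinal rating of 4, yet differ by this factor.
print("A is", exposure["A"] / exposure["B"], "times B")
```

Unlike the ordinal product, the result has units (dollars per year), so the ranking and the size of the gaps are both visible.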

*Note: the above triage is a simplistic example meant only to illustrate how risk measurement should include an economic underpinning. In risk measurement with FAIR, ranges are used to express the uncertainty associated with both the frequency and magnitude figures. For full-blown quantitative analyses with FAIR, Monte Carlo simulation and other statistical methods are used.
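To give a feel for what "ranges plus Monte Carlo" means, here is a rough sketch using only the Python standard library. Everything specific in it is my assumption for illustration, not FAIR's prescribed method: the minimum / most likely / maximum values are invented around Risk C, triangular distributions are used for simplicity, and each simulated year is assumed to contain at most one loss event.

```python
import random
import statistics


def simulate_annual_loss(freq_range, loss_range, trials=10_000, seed=0):
    """Simulate one year of loss per trial from (min, most likely, max) ranges."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    f_low, f_mode, f_high = freq_range
    l_low, l_mode, l_high = loss_range
    losses = []
    for _ in range(trials):
        # Draw this trial's annual event probability from its range.
        annual_probability = rng.triangular(f_low, f_high, f_mode)
        if rng.random() < annual_probability:
            # An event occurred: draw its magnitude from the loss range.
            losses.append(rng.triangular(l_low, l_high, l_mode))
        else:
            losses.append(0.0)
    return losses


# Hypothetical ranges: 0.05 to 0.20 events per year, $50k to $250k per event.
losses = simulate_annual_loss((0.05, 0.10, 0.20), (50_000, 100_000, 250_000))

mean_loss = statistics.mean(losses)
p95_loss = statistics.quantiles(losses, n=20)[-1]  # 95th percentile cut point

print(f"Average annual loss: ${mean_loss:,.0f}")
print(f"95th percentile annual loss: ${p95_loss:,.0f}")
```

The output is a distribution rather than a single number, so a decision maker can ask both "what do we lose in a typical year?" and "how bad is a bad year?".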

In summation: I’d encourage forgoing the gift of gab and kissing risk blarney goodbye. Instead, give the gift of risk measurement by implementing FAIR and enabling well-informed risk decisions.

Learn more:

Quantitative vs. Qualitative Measurement for Cyber Risk: What's the Difference?

Gartner Endorses Risk Quantification as Critical to Integrated Risk Management
