A round peg in a round hole

As I mentioned in Part 2 of this series, frameworks like NIST CSF (and PCI DSS, ISO 27xxx, FFIEC CAT, etc.) are inherently limited in their ability to help organizations measure risk, prioritize their concerns, or communicate the true value proposition of cyber security improvements. The good news is that these missing capabilities are where FAIR shines. That said, there are challenges…

But are all the holes round?

I have argued strongly in the past that the value proposition for everything we do in cyber security can be expressed in terms of its effect on risk (i.e., the frequency and/or magnitude of loss). For example:

- Stronger authentication lowers the frequency of illicit access

- Better logging (when combined with effective monitoring) improves the odds of earlier detection, which helps minimize the magnitude of an event

- Better backups reduce the potential impact from events that destroy or damage data

- etc…
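
To make the frequency and magnitude framing concrete, here is a minimal sketch of how the examples above could be compared. It is a deliberate simplification: it uses single point estimates, whereas a real FAIR analysis works with ranges and distributions. The function name and every number are hypothetical and chosen only for illustration.

```python
def annualized_loss(events_per_year: float, loss_per_event: float) -> float:
    """Expected annual loss = loss event frequency x loss magnitude."""
    return events_per_year * loss_per_event


# Hypothetical baseline: one loss event every two years, $200k per event.
baseline = annualized_loss(events_per_year=0.5, loss_per_event=200_000)

# Stronger authentication: assumed to halve the frequency of illicit access.
with_auth = annualized_loss(events_per_year=0.25, loss_per_event=200_000)

# Better backups: assumed to cut the magnitude of each event by 40%.
with_backups = annualized_loss(events_per_year=0.5, loss_per_event=120_000)

# The value of each control is the reduction in expected annual loss.
auth_value = baseline - with_auth
backup_value = baseline - with_backups

print(f"Authentication reduces expected annual loss by ${auth_value:,.0f}")
print(f"Backups reduce expected annual loss by ${backup_value:,.0f}")
```

Because both controls are expressed in the same unit (expected annual loss), they can be compared directly, which is the point of stating value in terms of risk.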

For “loss event” controls like those above, it’s relatively easy to measure effect and thus value. Many of the controls we employ, however, affect risk indirectly by preventing, detecting, or remediating non-compliant conditions in those loss event controls. For example:

- Preventing variance: Clearer, more effectively communicated policies regarding the organization’s authentication requirements reduce the odds that systems and applications will be configured in a non-compliant condition

- Detecting variance: Periodic auditing/testing of the authentication processes and protocols used by systems and applications improves timely detection of non-compliant conditions

- Remediating variance: Timely remediation of non-compliant authentication configurations reduces the duration of non-compliant conditions

These “variance” controls affect risk indirectly by improving the reliability of the loss event controls.
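
One way to picture this indirect effect is to treat a loss event control as spending part of each year in a non-compliant state, and to let the variance controls determine how large that part is. The sketch below is an assumption-laden toy model, not a FAIR formula: the function names, the linear approximation, and all numbers are hypothetical.

```python
def noncompliant_fraction(variances_per_year: float,
                          days_to_detect: float,
                          days_to_remediate: float) -> float:
    """Approximate share of the year a control spends non-compliant.

    Assumes variances do not overlap; capped at 1.0 (always non-compliant).
    """
    days = variances_per_year * (days_to_detect + days_to_remediate)
    return min(1.0, days / 365.0)


def effective_frequency(fraction_noncompliant: float,
                        freq_compliant: float,
                        freq_noncompliant: float) -> float:
    """Loss event frequency, weighted by time spent in each state."""
    return ((1 - fraction_noncompliant) * freq_compliant
            + fraction_noncompliant * freq_noncompliant)


# Hypothetical baseline: 2 variances a year, 60 days to detect, 13 to fix.
before = noncompliant_fraction(2, days_to_detect=60, days_to_remediate=13)

# Preventing variance: clearer policies assumed to halve the variance rate.
after = noncompliant_fraction(1, days_to_detect=60, days_to_remediate=13)

# Assumed loss event frequencies: 0.1/year when compliant, 1.0/year when not.
freq_before = effective_frequency(before, 0.1, 1.0)
freq_after = effective_frequency(after, 0.1, 1.0)

print(f"Non-compliant share of the year: {before:.0%} -> {after:.0%}")
print(f"Loss events per year: {freq_before:.2f} -> {freq_after:.2f}")
```

Shortening detection or remediation time lowers the same fraction, so all three kinds of variance control end up changing loss event frequency only through the reliability of the underlying control.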

Measuring the value of risk management controls (e.g., risk landscape visibility, analysis quality, root cause analysis, etc.) can be even more challenging because their effect on risk is further abstracted from loss events. For example:

- Having good intelligence about the capabilities, methods, and intentions of threat communities is valuable when setting priorities and defining strategies

- Accurate risk analyses help organizations set appropriate priorities and identify optimum strategies

- Strong root cause analysis enables organizations to identify and correct systemic problems that otherwise waste resources and prevent key deficiencies from being addressed

Essentially, measuring the value of risk management controls requires a broader, more systemic analysis.

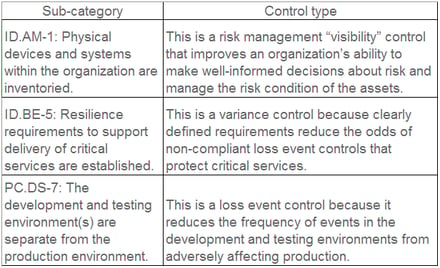

So what does all of this control mumbo-jumbo have to do with NIST CSF? Well, if we examine the framework’s sub-categories, we’ll see they are a mixed bag of loss event controls, variance controls, and risk management controls. For example:

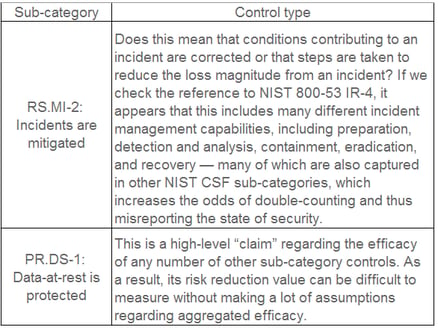

Unfortunately, some of the NIST CSF’s sub-categories are ambiguous enough that it’s hard to know for sure what control function they’re supposed to fulfill. Other sub-categories cover multiple control types, and yet others are higher-level condition statements that could encompass many other controls of various types. For example:

The bottom line

Undoubtedly (and this again is not unique to this framework), the NIST CSF sub-categories are the product of brainstorming about which control-like things organizations should have in place. Given the varied nature of these sub-categories, as well as the absence of an underlying ontology that captures relationships between the controls (which I discussed in Part 2 of this series), the odds of an organization accurately measuring and appropriately prioritizing its control improvements are extremely low unless it leverages an analytic method like FAIR. Even then, given the challenges noted above, it can be a non-trivial exercise.

In the next post of this series, I’ll provide an example analysis of the risk reduction benefit from one or more of the NIST CSF sub-categories. Hang in there…
